- On Monday at WWDC, Apple subtly showed how much work it is doing on the latest AI and machine learning technologies.

- Unlike most AI-powered tech companies, Apple does cutting-edge processing on its devices rather than relying on the cloud.

- Rather than talking about AI models and technologies, Apple’s product focus means it usually just shows off new features that are quietly enabled by AI behind the scenes.

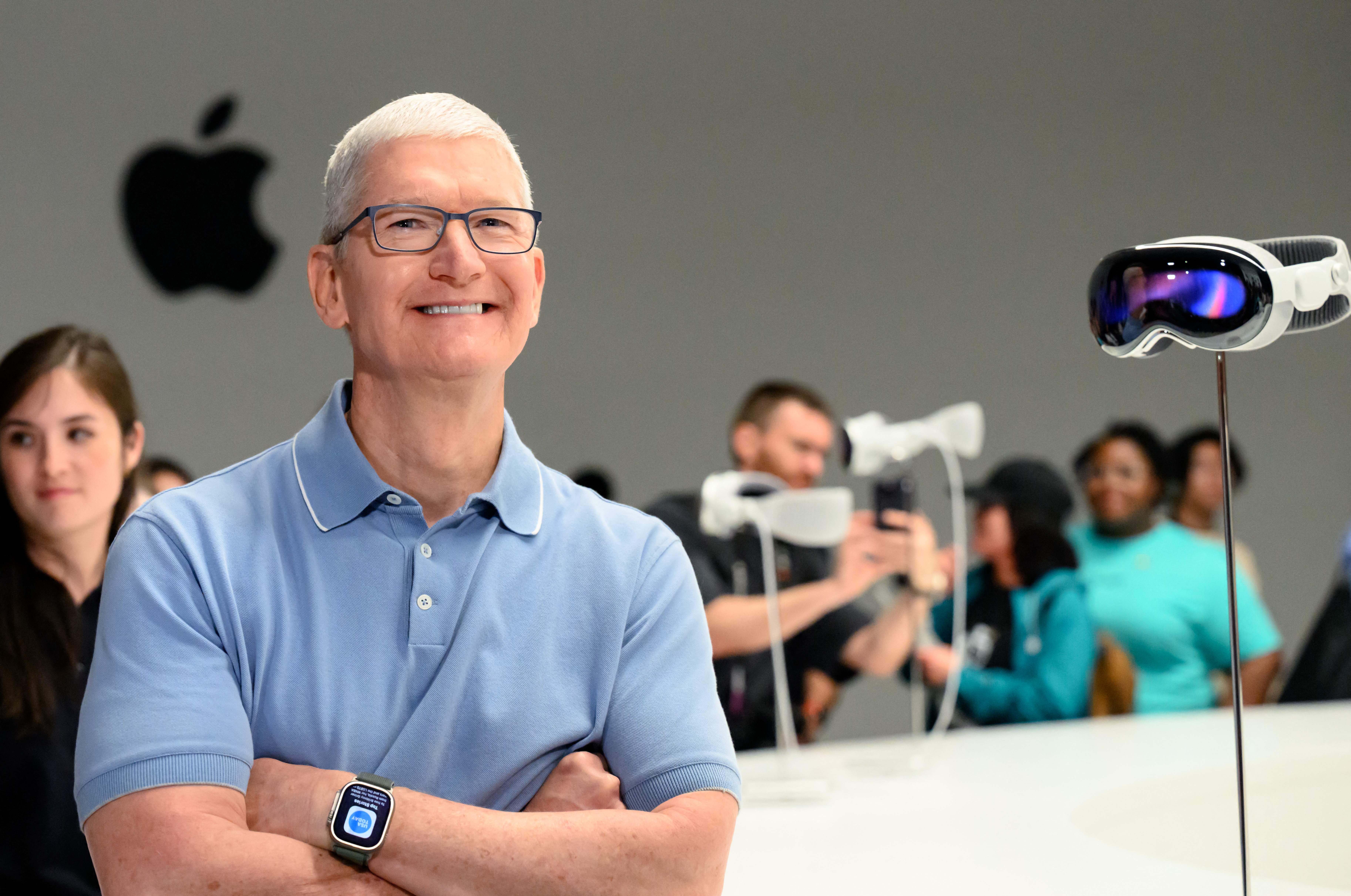

Apple Park is seen ahead of the Worldwide Developers Conference (WWDC) in Cupertino, California, on June 5, 2023.

Josh Edelson | AFP | Getty Images

On Monday during Apple’s annual WWDC developer conference, the company revealed how much work it was doing on the latest AI and machine learning technologies.

As Microsoft, Google, and startups like OpenAI embraced cutting-edge machine learning technologies like chatbots and generative AI, Apple seemed to sit on the sidelines.

But on Monday, Apple announced several significant AI features, including an improved iPhone autocorrect based on machine learning software that uses a transformer language model, the same technology that underlies ChatGPT. Apple said it will even learn from how the user texts and types in order to improve.

Craig Federighi, Apple’s software chief, joked about autocorrect’s tendency to use the nonsensical word “ducking” to replace a common expletive.

Monday’s biggest news was Apple’s cool new augmented reality headset, the Vision Pro, but the company nonetheless showed that it is working on and paying attention to developments in the latest machine learning and artificial intelligence technology. OpenAI’s ChatGPT may have reached more than 100 million users within two months of its launch last year, but Apple is now using the technology to improve a feature that a billion iPhone owners use every day.

Unlike its competitors, who build larger models with server farms, supercomputers, and terabytes of data, Apple wants AI models on its devices. The new autocorrect feature is particularly impressive because it works on the iPhone, while models like ChatGPT require hundreds of expensive GPUs working in tandem.

On-device AI bypasses many of the data privacy issues faced by cloud-based AI. When a model can be run on a phone, Apple needs to collect less data in order to run it.

The approach is also closely tied to Apple’s control of its hardware, right down to its own silicon chips. Apple builds new AI circuits and GPUs into its chips every year, and its control over the overall architecture allows it to adapt to changes and new technologies.

Apple doesn’t like to talk about “artificial intelligence”; it prefers the more academic phrase “machine learning,” or it simply talks about the benefit the technology enables.

Some of the other leading AI companies have leaders from academic backgrounds. This has resulted in a focus on showing their work, explaining how it can improve in the future, and documenting it so that others can study and build on it.

Apple is a product company, and it’s been very secretive for decades. Rather than talking about the specific AI model, the training data, or how it might improve in the future, Apple simply mentions the feature and says there’s great technology working behind the scenes.

One such example on Monday is an AirPods Pro improvement that automatically turns off noise cancellation when a user is engaged in a conversation. Apple has not framed it as a machine learning feature, but it is a difficult problem to solve, and the solution is based on artificial intelligence models.

One of the boldest features announced Monday, Apple’s new Digital Persona, scans a user’s face and body in 3D and can then virtually recreate what they look like when they videoconference with other people while wearing the Vision Pro headset.

Apple also mentioned several other new features that draw on the company’s skill in neural networks, such as the ability to identify fields to fill out in a PDF.

One of the biggest cheers of the afternoon in Cupertino was for a machine learning feature that enables the iPhone to recognize a user’s pet, as opposed to other cats or dogs, and put all of the user’s pet photos into a folder.
